Previously I wrote about events using RabbitMQ and Kafka; this time I take a similar approach, using the message broker feature of a different tool: Redis.

What is Redis?

According to its website, Redis is (emphasis mine):

"an open source (BSD licensed), in-memory data structure store, used as a database, cache, and **message broker**."

Redis works really well under heavy load. I’ve been using it for more than five years and it has never disappointed me. It provides different features that could be relevant to the problems you’re trying to solve.

This time I’ll describe using the Pub/Sub support for messaging between different services.

What is Pub/Sub?

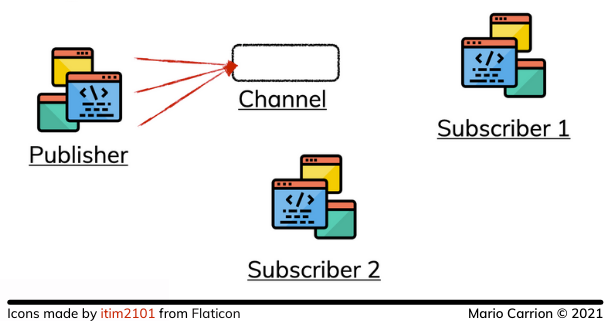

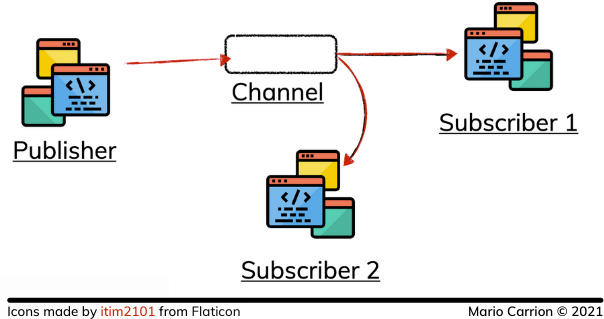

Pub/Sub is a messaging paradigm that consists of defining Publishers and Subscribers with Channels in between them, where Publishers act as “message senders” and Subscribers act as “message receivers”. Publishers do not send messages directly to Subscribers but rather to Channels, which act as intermediaries between the two. The idea is for Subscribers to receive only the messages they are interested in while staying decoupled from the Publishers.

Redis supports this paradigm and defines different commands for publishing, subscribing and unsubscribing; it even supports pattern-matching subscriptions.
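To give a feel for what a pattern-matching subscription selects, here is a minimal sketch. It is not how Redis implements matching: I am assuming that Go's `path.Match` is a close enough stand-in for Redis's glob-style patterns when the pattern is as simple as `tasks.*`. The `matches` and `subscribedChannels` helpers are hypothetical names used only for this illustration.

```go
package main

import "path"

// matches reports whether a channel name matches a glob-style pattern.
// Assumption: path.Match stands in for Redis's own matcher; the two agree
// for simple patterns such as "tasks.*" but are not identical in general
// (path.Match, for example, never lets "*" match a "/").
func matches(pattern, channel string) bool {
	ok, err := path.Match(pattern, channel)
	return err == nil && ok
}

// subscribedChannels returns the channels a pattern subscription would
// receive messages from, out of a list of candidate channel names.
func subscribedChannels(pattern string, channels []string) []string {
	var out []string
	for _, c := range channels {
		if matches(pattern, c) {
			out = append(out, c)
		}
	}
	return out
}
```

With this sketch, a single `tasks.*` pattern covers channels such as `tasks.event.created` and `tasks.event.deleted`, while a channel like `users.event.created` is left out.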

One really important thing about the Redis implementation is that the channel doesn’t store any messages. If there are no subscribers, the messages are sent to nobody and are therefore lost.

But, as expected, if subscribers are present, then the messages are sent to the clients listening on those channels.
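To make those delivery semantics concrete, here is a small in-memory sketch. It is a toy, not Redis: `Broker`, `Subscribe` and `Publish` are hypothetical names of my own. The one detail borrowed from Redis is that `Publish` reports how many subscribers received the message, similar to the integer reply of the `PUBLISH` command.

```go
package main

import "sync"

// Broker is a toy, in-memory stand-in for Redis Pub/Sub (hypothetical type,
// used only to illustrate the delivery semantics).
type Broker struct {
	mu   sync.Mutex
	subs map[string][]chan string
}

// NewBroker returns a Broker with no subscribers.
func NewBroker() *Broker {
	return &Broker{subs: make(map[string][]chan string)}
}

// Subscribe registers a new subscriber on channel and returns the Go channel
// where its messages will arrive.
func (b *Broker) Subscribe(channel string) <-chan string {
	b.mu.Lock()
	defer b.mu.Unlock()

	ch := make(chan string, 16) // small buffer so Publish never blocks in this demo
	b.subs[channel] = append(b.subs[channel], ch)
	return ch
}

// Publish hands msg to the subscribers registered right now and returns how
// many of them received it. Nothing is stored: with no subscribers the
// message is simply dropped and the result is 0.
func (b *Broker) Publish(channel, msg string) int {
	b.mu.Lock()
	defer b.mu.Unlock()

	delivered := 0
	for _, ch := range b.subs[channel] {
		select {
		case ch <- msg:
			delivered++
		default: // this subscriber's buffer is full, so it misses the message
		}
	}
	return delivered
}
```

Publishing before anyone subscribes returns 0, and a subscriber that joins afterwards never sees that earlier message; only messages published while it is subscribed reach it.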

Publisher implementation using a Repository

The code used for this post is available on GitHub.

For Go, I recommend using the go-redis/redis package because it allows you to access Redis in a type-safe way.

Our redis.Task type is the repository in charge of publishing the events to different channels; the code is similar to what we did in the past:

    // publish JSON-encodes e and publishes it to the given Redis channel.
    func (t *Task) publish(ctx context.Context, spanName, channel string, e interface{}) error {
        var b bytes.Buffer

        if err := json.NewEncoder(&b).Encode(e); err != nil {
            return internal.WrapErrorf(err, internal.ErrorCodeUnknown, "json.Encode")
        }

        // Only clients subscribed at this moment receive the message.
        res := t.client.Publish(ctx, channel, b.Bytes())
        if err := res.Err(); err != nil {
            return internal.WrapErrorf(err, internal.ErrorCodeUnknown, "client.Publish")
        }

        return nil
    }

The biggest difference is that in this case each message type uses its own channel; this will become much clearer when we look at the subscriber.
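As a rough illustration of the channel-per-event idea, the sketch below wraps a `publish` helper with one method per event. The channel names are the ones the subscriber listens to; everything else (`Task`, `publisher`, `TaskEvents`, `recorder`, `demoChannels`) is a hypothetical stand-in I made up so the example runs without a Redis server. It is not the code from the repository.

```go
package main

import (
	"context"
	"encoding/json"
	"fmt"
)

// Task is a hypothetical, trimmed-down payload used only for this sketch.
type Task struct {
	ID          string `json:"id"`
	Description string `json:"description"`
}

// publisher is a hypothetical interface covering the single operation the
// sketch needs; a thin wrapper around a real Redis client could satisfy it.
type publisher interface {
	Publish(ctx context.Context, channel string, payload []byte) error
}

// TaskEvents publishes every event type to its own channel.
type TaskEvents struct {
	client publisher
}

func (t *TaskEvents) Created(ctx context.Context, task Task) error {
	return t.publish(ctx, "tasks.event.created", task)
}

func (t *TaskEvents) Updated(ctx context.Context, task Task) error {
	return t.publish(ctx, "tasks.event.updated", task)
}

// Deleted only needs the identifier of the removed task.
func (t *TaskEvents) Deleted(ctx context.Context, id string) error {
	return t.publish(ctx, "tasks.event.deleted", id)
}

// publish JSON-encodes e and sends it to the given channel.
func (t *TaskEvents) publish(ctx context.Context, channel string, e interface{}) error {
	b, err := json.Marshal(e)
	if err != nil {
		return fmt.Errorf("json.Marshal: %w", err)
	}
	if err := t.client.Publish(ctx, channel, b); err != nil {
		return fmt.Errorf("client.Publish: %w", err)
	}
	return nil
}

// recorder is an in-memory publisher that remembers the channels used,
// so the sketch can be tried without a Redis server.
type recorder struct {
	channels []string
}

func (r *recorder) Publish(_ context.Context, channel string, _ []byte) error {
	r.channels = append(r.channels, channel)
	return nil
}

// demoChannels publishes one event of each type and returns the channels used.
func demoChannels() ([]string, error) {
	rec := &recorder{}
	events := &TaskEvents{client: rec}
	ctx := context.Background()

	task := Task{ID: "1", Description: "write post"}
	if err := events.Created(ctx, task); err != nil {
		return nil, err
	}
	if err := events.Updated(ctx, task); err != nil {
		return nil, err
	}
	if err := events.Deleted(ctx, task.ID); err != nil {
		return nil, err
	}
	return rec.channels, nil
}
```

Keeping the channel name inside each method means callers never pass channel strings around, and a subscriber can tell event types apart by channel name alone.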

Subscriber implementation

Similarly to what I did in the past, I had to build a new service in charge of receiving those events to update the Elasticsearch records. The key part of this new service is the usage of pattern-matching for subscribing to multiple events:

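    // ListenAndServe subscribes to every channel matching "tasks.*" and
    // handles incoming messages in a separate goroutine.
    func (s *Server) ListenAndServe() error {
        pubsub := s.rdb.PSubscribe(context.Background(), "tasks.*") // Pattern-matching subscription

        // Wait for the subscription to be confirmed before handling messages.
        _, _ = pubsub.Receive(context.Background()) // XXX: error checking omitted for brevity

        s.pubsub = pubsub

        go func() {
            // msg.Channel holds the concrete channel name that matched the pattern.
            for msg := range pubsub.Channel() {
                switch msg.Channel {
                case "tasks.event.updated", "tasks.event.created":
                    // ...
                case "tasks.event.deleted":
                    // ...
                }
            }
            // ...
        }()

        return nil
    }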
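The bodies of the `case` branches are elided above. One possible way to fill them in is sketched here, under assumptions of my own: that created and updated events carry a JSON-encoded task, that deleted events carry a JSON-encoded ID, and that the search index can be hidden behind a small interface. `Task`, `index`, `handle` and `memoryIndex` are hypothetical names, not the types used in the repository.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Task is a hypothetical, trimmed-down payload used only for this sketch.
type Task struct {
	ID          string `json:"id"`
	Description string `json:"description"`
}

// index is a hypothetical stand-in for the search index the service updates.
type index interface {
	Index(task Task) error
	Delete(id string) error
}

// handle routes one received message based on the channel it arrived on.
func handle(idx index, channel string, payload []byte) error {
	switch channel {
	case "tasks.event.created", "tasks.event.updated":
		var task Task
		if err := json.Unmarshal(payload, &task); err != nil {
			return fmt.Errorf("decode task: %w", err)
		}
		return idx.Index(task)
	case "tasks.event.deleted":
		var id string
		if err := json.Unmarshal(payload, &id); err != nil {
			return fmt.Errorf("decode id: %w", err)
		}
		return idx.Delete(id)
	default:
		// A "tasks.*" subscription also receives channels we do not know about.
		return fmt.Errorf("unexpected channel %q", channel)
	}
}

// memoryIndex is an in-memory index for trying the sketch without a search server.
type memoryIndex map[string]Task

func (m memoryIndex) Index(t Task) error {
	m[t.ID] = t
	return nil
}

func (m memoryIndex) Delete(id string) error {
	delete(m, id)
	return nil
}
```

Inside the loop, this could be called as `handle(idx, msg.Channel, []byte(msg.Payload))`, since go-redis exposes the payload as a string.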

With both pieces in place, every time a customer interacts with our REST API a message will be published and then consumed by the subscriber, which in the end will update the Elasticsearch records.

Conclusion

Using Pub/Sub with Redis is a simple way to implement messaging between different services. However, compared to similar tools like Kafka or RabbitMQ, we need to be aware that messages are not stored in Redis, not even temporarily: if no Subscribers are listening when a message is published, that message is lost. Depending on the use case, this may not be the best option.

Think well about the problem you’re trying to solve, maybe Redis is the lightweight solution you should be using.

Talk to you later.

Recommended Reading

If you’re looking to do something similar in RabbitMQ and Kafka, I recommend reading the following links:

- Microservices in Go: Events and Background jobs using RabbitMQ

- Microservices in Go: Events Streaming using Kafka